China’s AI Race: Apps Shine, but Core Models Still Lag Behind

A recent Epoch AI report finds that Chinese large language models trail their U.S. counterparts by about seven months on average. The gap widens to 14 months when new closed‑source U.S. models debut and narrows to four months during rapid open‑source bursts. The United States keeps its lead by releasing updates almost continuously and by focusing on training‑paradigm breakthroughs such as new reasoning‑path designs, rather than just scaling model size. Chinese developers, by contrast, have followed a “leapfrog” pattern, with big jumps from Baichuan 2 to Qwen‑14B, Yi‑34B, DeepSeek‑V2, and Qwen 3 Max, mostly driven by larger parameter counts, mixture‑of‑experts architectures, and aggressive engineering tweaks.

To close the gap, Chinese firms are turning to system‑level innovations. Huawei’s CloudMatrix links hundreds of chips into a single “super‑AI server,” while Sugon’s ScaleX cluster coordinates 10,000 GPUs for cross‑node training, emphasizing communication efficiency and resource scheduling over raw chip speed. Low‑precision FP8 computing shows that algorithm‑hardware co‑design can boost training efficiency without the latest process nodes.

Despite these advances, a shortage of high‑end compute remains the biggest obstacle. Training compute for China’s top models has slowed to roughly three‑fold annual growth, far behind the five‑fold pace elsewhere, meaning it could take several years to match global leaders. Industry insiders at the AGI‑Next Frontier Summit warned that the compute gap may even be widening, limiting how quickly foundational models can improve. In short, while applications like OpenClaw demonstrate China’s growing AI talent, the country still needs stronger hardware foundations to keep pace with the U.S.
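
The arithmetic behind the widening-gap concern can be sketched in a few lines. This is only an illustration: it assumes both sides start from the same compute level and that the three-fold and five-fold annual growth rates quoted above stay constant, neither of which the report necessarily claims. The function name is hypothetical.

```python
def relative_compute_gap(years: float,
                         leader_growth: float = 5.0,
                         follower_growth: float = 3.0) -> float:
    """Ratio of leader to follower training compute after `years`.

    Assumes equal starting compute and constant annual growth multipliers
    (illustrative assumptions, not figures from the report).
    """
    return (leader_growth / follower_growth) ** years


if __name__ == "__main__":
    for year in range(1, 4):
        gap = relative_compute_gap(year)
        print(f"after {year} year(s): leader has {gap:.2f}x the follower's compute")
```

Under these assumptions the ratio compounds by a factor of 5/3 each year, so the gap grows rather than shrinks unless the slower growth rate picks up.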

Living ‘Neuro‑Bots’ Made from Frog Cells Build Their Own Nerves and Switch Genes On and Off

Scientists at Harvard’s Wyss Institute have taken tiny, frog‑cell robots a step further by giving them a self‑forming nervous system. The new “neuro‑bots” start life as biobots—microscopic, self‑powered machines built entirely from skin cells taken from Xenopus laevis frog embryos. In a breakthrough experiment, researchers implanted early‑stage neuronal precursor cells into these biobots just minutes after they formed. Within hours, the cells organized themselves into a primitive nervous network, and the robots began to change the activity of several genes as they matured.

The team says this is the first time a living robot has been coaxed to grow its own nerve tissue, opening a fresh window onto how cells communicate, develop, and respond to their environment. "Neuro‑bots defy previous scientific thinking and open a new frontier in biomedical research," said Donald Ingber, director of the Wyss Institute. While still in early stages, the technology could eventually help scientists study brain development, test drug effects, or even inspire new medical devices that repair damaged tissue from the inside out.

China’s Robots Near Human‑Level Skills – From Marathon Runs to Tennis Matches

China’s “embodied intelligence” – robots that can move, sense and decide like people – is closing the gap with the United States. In a 5,000‑square‑metre training centre in Beijing, more than 120 robots practice everyday tasks such as picking avocados, sorting groceries and even changing a baby’s diaper. The centre has recreated over 30 real‑world settings – homes, supermarkets and offices – creating the country’s largest robot‑training matrix.

Recent showcases have stunned viewers: a humanoid robot completed a half‑marathon in 2 hours 40 minutes, a tennis‑playing robot rallied with a human for 20 shots, and another performed high‑speed box‑flipping and one‑handed tricks on live TV. These feats are powered by a new “motor cerebellum” algorithm that learns motion patterns from scattered human data, eliminating the need for costly motion‑capture rigs.

Industry leaders say the biggest hurdle is generalisation – robots still struggle outside pre‑programmed scenarios. To overcome this, China is flooding the field with massive, high‑quality data (over two million downloads of the open‑source Robomind dataset) and developing smarter algorithms that can handle unfamiliar environments. Partnerships with top universities and firms like NIO and China Shipbuilding Group aim to turn these advances into everyday tools, bringing truly adaptable, human‑level robots closer to reality.

Why Lithium‑Ion Batteries Crack: New Real‑Time Images Reveal the Hidden Problem

A research team at the University of Houston has captured the first real‑time images of tiny, needle‑like lithium structures, called dendrites, breaking inside solid‑state batteries while they charge and discharge. Using a special microscope that can watch the battery’s interior as it works, Professor Yan Yao and his colleagues saw the dendrites snap like brittle glass, whether they formed in liquid or solid electrolytes. This observation suggests that the fragile nature of these dendrites is a fundamental issue, not just a quirk of a particular battery design.

The findings help explain why some next‑generation batteries lose capacity or fail unexpectedly, a problem that has long puzzled engineers. By visualizing the fracture process as it happens, the study provides a clear target for scientists aiming to design tougher, longer‑lasting batteries for electric cars, smartphones, and renewable‑energy storage.

China’s BeiDou: From Remote Mountains to Moon‑Guiding Navigation

Lin Baojun and his 81‑person team have turned China’s BeiDou satellite system into a global navigation powerhouse in just over three years—a feat that took the U.S. GPS two decades. Instead of building hundreds of ground stations, they let the satellites talk to each other in space using a Ka‑band phased‑array link, creating a “WeChat‑style” network that works even over foreign territory. The first experimental BeiDou‑3 satellite launched in 2015, and by 2020 the full constellation was orbiting the Earth, delivering ultra‑precise timing and positioning to everything from drone patrols on Laojun Mountain to robot dogs playing with children.

Key to the system’s accuracy are hydrogen‑maser clocks aboard more than 130 satellites, the most precise timepieces ever placed in space. When a dual‑satellite mission faced a cascade of failures—lost control, broken solar panels, and a missed lunar capture window—Lin’s team performed emergency fixes, re‑calculated orbits, and ultimately succeeded, proving that even “impossible” challenges can be overcome.

Today BeiDou underpins smart agriculture, port logistics, low‑altitude commerce and, soon, lunar navigation. Lin envisions a future where a phone’s map app guides astronauts from Earth to the Moon without any special equipment, rewriting humanity’s coordinate system one satellite at a time.

Breakthrough in All‑Electrical Spin‑Chip Writing: 5× Boost Using Copper‑doped Platinum

Researchers at the Institute of Semiconductors, Chinese Academy of Sciences, have announced a major step forward for the next generation of spin‑based computer chips. By mixing copper into platinum to create a Pt‑Cu alloy, they amplified the vertical spin‑torque effect more than fivefold. This improvement allowed them to flip magnetic bits (the “0” and “1” states) using only an electric current, with no external magnetic field, and with a 100% success rate.

The team built the devices on a standard 4‑inch silicon wafer, using a high‑anisotropy FeCoB layer that keeps the magnetic bits stable at high temperatures. Remarkably, the current needed to switch the bits was just 1.8 × 10⁷ A/cm², the lowest ever reported for an all‑electrical, CMOS‑compatible process.

These vertical spin‑orbit torque chips promise faster speeds and far lower power consumption than today’s silicon transistors, addressing the physical limits that conventional chips are hitting. The findings were published in *Advanced Functional Materials* and are backed by China’s Ministry of Science and Technology, the National Natural Science Foundation, and Beijing municipal funds. If scaled up, this technology could become a core component of future high‑density, energy‑efficient processors from industry leaders such as Intel, Samsung and TSMC.

Deep‑Sea Proteins Promise Faster, More Accurate Disease Tests

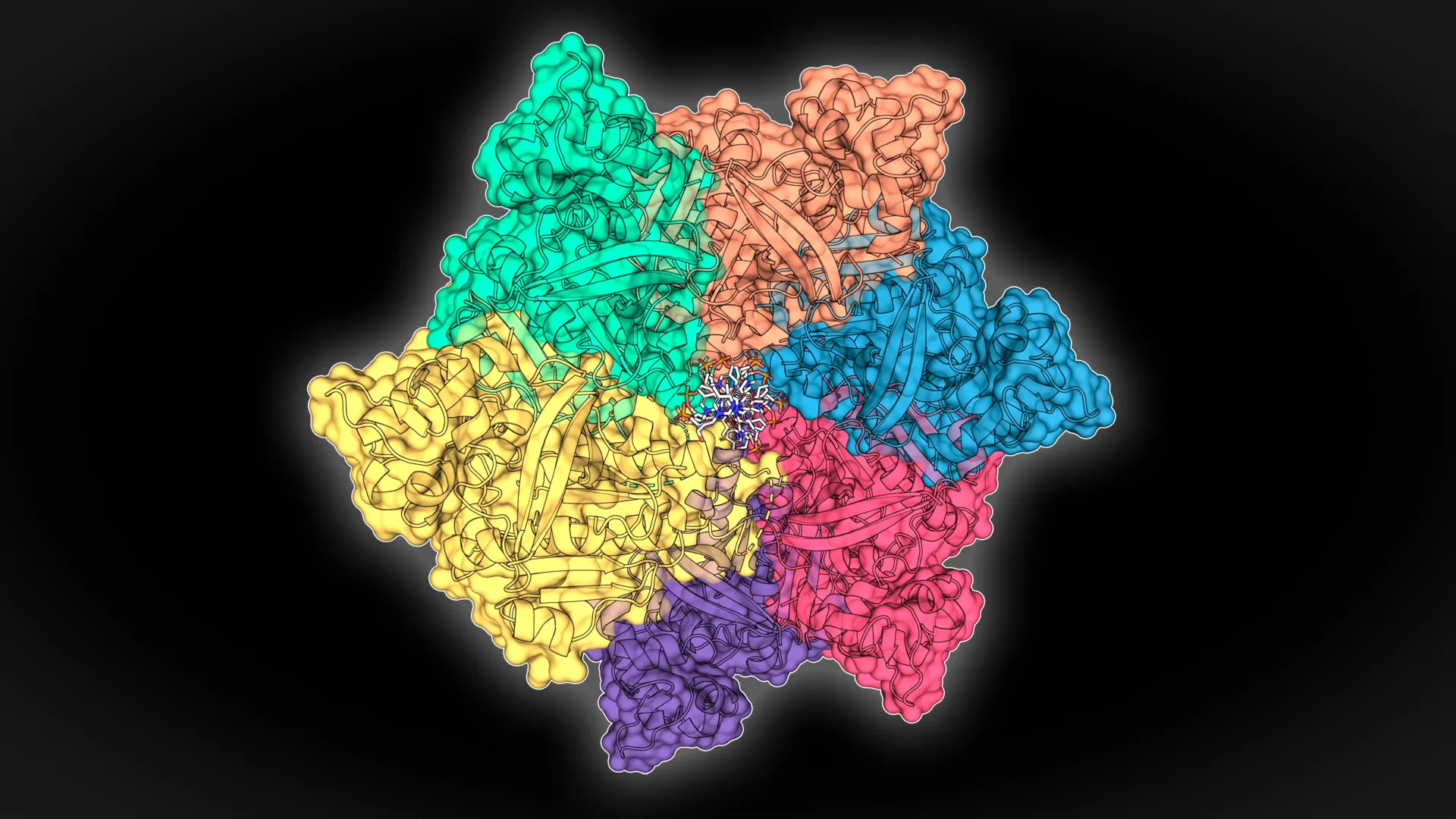

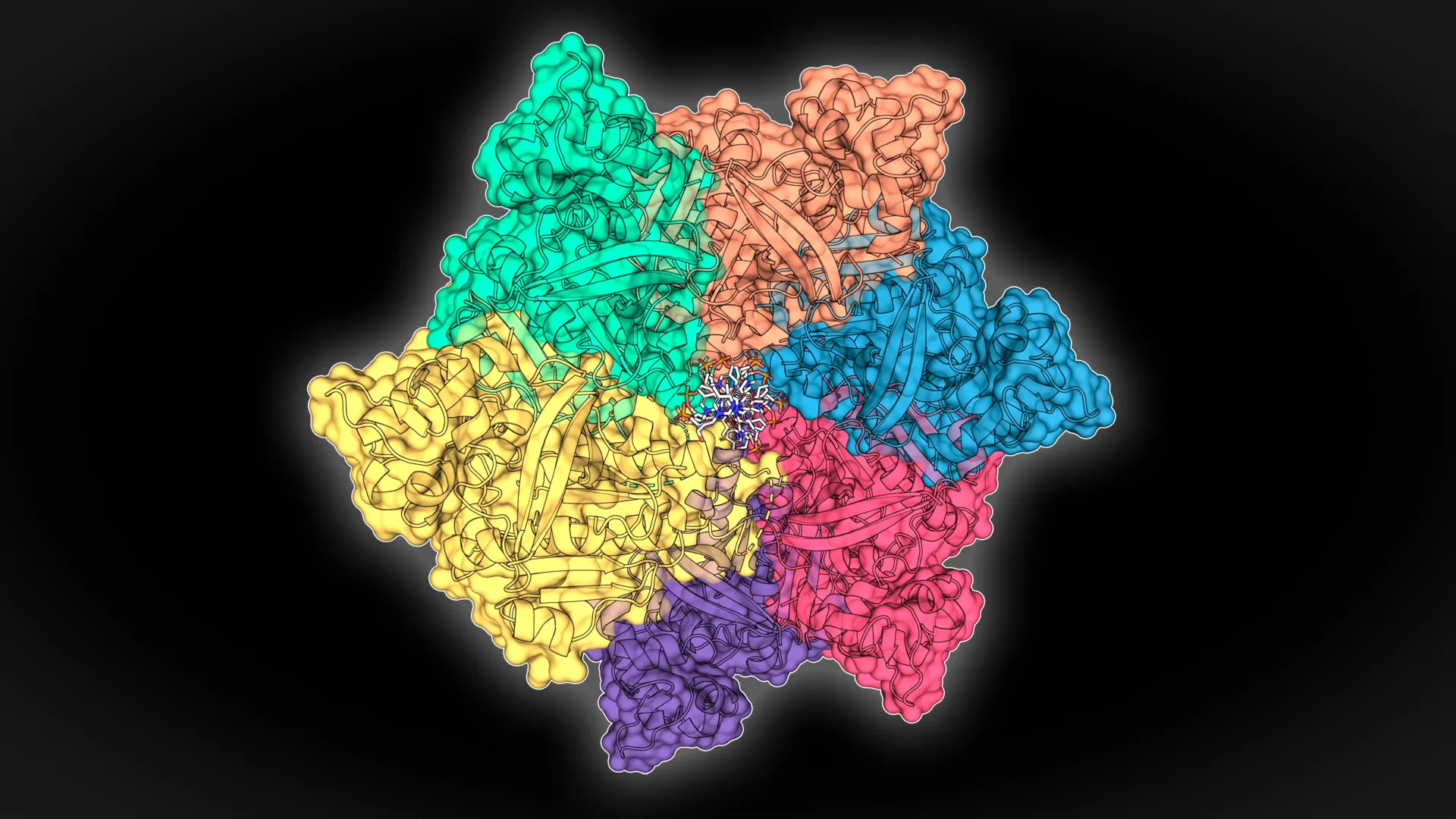

Scientists at Durham University have uncovered a new class of ultra‑tough proteins living in some of Earth’s most hostile places – volcanic lakes and deep‑sea vents. These proteins are built to cling to DNA even when temperatures soar, salt levels spike, or chemicals get aggressive. By mining massive genetic databases, the researchers identified several candidates that stay stable under extreme conditions.

One of these newly discovered proteins turned out to be a game‑changer for rapid diagnostic tools that use a technique called LAMP (Loop‑mediated Isothermal Amplification). When added to the test, the protein makes the reaction run faster and detect far smaller amounts of viral or bacterial genetic material. In practical terms, doctors could get results in minutes rather than hours, and catch infections that might otherwise slip through the cracks.

How AI Is Revolutionizing the Study of Complex Systems – From Fruit‑Fly Brains to Weather Forecasts and Social Simulations

Scientists are now looking at the world as a web of interacting parts instead of isolated pieces, and artificial intelligence is the catalyst for this shift. In aging research, for example, the focus has moved from single genes to the whole network of biological interactions that drive the aging process. A striking illustration comes from the FlyWire project, where AI‑powered image recognition cut a task that would have taken an estimated 50,000 person‑years of manual tracing down to about 33 person‑years of human verification, yielding a full map of the fruit‑fly brain’s connections.

Weather prediction, another classic complex system, benefits from massive sensor data combined with deep‑learning models that complement traditional physics‑based approaches, delivering far more accurate forecasts. In the social sciences, large language models now act as realistic “agents” in simulations, mimicking human psychology and decision‑making, which makes virtual societies far more lifelike and useful for policy testing.

Beyond applications, AI itself shows hallmarks of complex systems: sudden “emergent” abilities appear once models reach a certain size, and performance improves predictably as data and parameters scale. Researchers are even borrowing physics equations to describe how AI learns, and using network and causal‑analysis tools to peek inside black‑box models. In short, AI is both a powerful new lens for studying complex phenomena and a complex system that teaches us fresh ways to build smarter, more interpretable technologies.
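
The idea that performance "improves predictably" with scale is usually expressed as a power law, which appears as a straight line on a log-log plot. The sketch below shows one way such a trend could be fitted. The functional form `loss = a * N ** (-alpha)` is a common simplification, and the constants and data points are synthetic values made up for illustration, not measurements from any real model.

```python
import math


def power_law_loss(n_params: float, a: float = 10.0, alpha: float = 0.1) -> float:
    """Hypothetical scaling curve: loss falls as a power of parameter count."""
    return a * n_params ** (-alpha)


def fit_power_law(sizes, losses):
    """Least-squares fit of log(loss) = log(a) - alpha * log(N).

    Returns the estimated (a, alpha).
    """
    xs = [math.log(n) for n in sizes]
    ys = [math.log(v) for v in losses]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


if __name__ == "__main__":
    # Synthetic "measurements" generated from the hypothetical curve above.
    sizes = [1e6, 1e7, 1e8, 1e9]
    losses = [power_law_loss(n) for n in sizes]
    a_hat, alpha_hat = fit_power_law(sizes, losses)
    print(f"fitted a = {a_hat:.3f}, alpha = {alpha_hat:.3f}")
    print(f"extrapolated loss at 1e10 params: {power_law_loss(1e10, a_hat, alpha_hat):.3f}")
```

Fitting in log space is what makes extrapolation to larger models straightforward. Sudden "emergent" abilities are precisely the cases where a smooth curve of this kind fails to describe what is observed.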
