Boosting AI Speed: New Tricks That Make Generative AI Models Run Nearly 4× Faster

A recent deep-tech roundup looks at how researchers are squeezing dramatic performance gains out of today's multimodal generative AI models, spanning text-to-image, speech translation, code generation and recommendation engines. By profiling the hardware demands of these models, the team pinpointed three main bottlenecks: compute intensity, memory bandwidth, and shifting input distributions. They then applied a suite of optimizations: torch.compile and CUDA Graphs to streamline GPU execution by reducing kernel-launch overhead, FlashAttention through PyTorch's scaled dot-product attention (SDPA) to accelerate the Transformer's core attention math, quantization to boost computational density, and LayerSkip, a self-speculative decoding technique that drafts tokens using only a model's early layers and then verifies them with the remaining layers. Combined, these techniques delivered an average 3.88× inference speed-up across a range of tasks, with especially large gains in small-batch scenarios. For example, the Chameleon text-to-image model saw its runtime cut dramatically, while Seamless's speech-translation pipeline approached real-time performance. The findings suggest that software-level engineering, without any redesign of the underlying models, can make large generative models far more practical for everyday applications, from interactive chatbots to on-device AI services, while keeping hardware costs and power consumption in check.
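To make the draft-then-verify idea behind speculative decoding concrete, here is a minimal toy sketch in plain Python. It is not the LayerSkip implementation. `full_model`, `draft_model`, and the arithmetic inside them are hypothetical stand-ins for "run every layer" and "exit early". The sketch assumes greedy decoding, where the output must match what the full model would have produced on its own.

```python
from typing import Callable, List, Tuple

Token = int
Model = Callable[[List[Token]], Token]


def full_model(context: List[Token]) -> Token:
    """Hypothetical stand-in for a full forward pass: a fixed next-token rule."""
    return (sum(context) * 31 + len(context)) % 50


def draft_model(context: List[Token]) -> Token:
    """Hypothetical stand-in for an early exit: cheap and usually right.

    For illustration it disagrees with full_model whenever the context
    length is a multiple of 4.
    """
    guess = full_model(context)
    return guess if len(context) % 4 else (guess + 1) % 50


def greedy_decode(model: Model, prompt: List[Token], n_new: int) -> List[Token]:
    """Baseline: one model call per generated token."""
    out = list(prompt)
    for _ in range(n_new):
        out.append(model(out))
    return out


def speculative_decode(
    full: Model, draft: Model, prompt: List[Token], n_new: int, k: int = 4
) -> Tuple[List[Token], int]:
    """Draft up to k tokens cheaply, then verify them with the full model.

    Returns the generated sequence and the number of verification rounds.
    In a real system each round is one batched full-model pass over all
    drafted positions; here the positions are checked in a Python loop.
    """
    out = list(prompt)
    target_len = len(prompt) + n_new
    verify_rounds = 0
    while len(out) < target_len:
        steps = min(k, target_len - len(out))
        proposal: List[Token] = []
        for _ in range(steps):
            proposal.append(draft(out + proposal))

        verify_rounds += 1
        for i, tok in enumerate(proposal):
            expected = full(out + proposal[:i])
            if tok != expected:
                # Keep the agreed prefix, then take the full model's token.
                out.extend(proposal[:i])
                out.append(expected)
                break
        else:
            # Every drafted token was accepted.
            out.extend(proposal)
    return out, verify_rounds


if __name__ == "__main__":
    prompt = [1, 2, 3]
    reference = greedy_decode(full_model, prompt, 20)
    tokens, rounds = speculative_decode(full_model, draft_model, prompt, 20)
    print("matches full-model output:", tokens == reference)
    print("verification rounds:", rounds, "vs. 20 sequential full-model steps")
```

The point of the sketch is that the output is identical to plain greedy decoding, but the expensive model is consulted in fewer sequential rounds whenever the cheap draft is mostly right. That is why gains tend to be largest when latency per step, rather than raw throughput, dominates.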


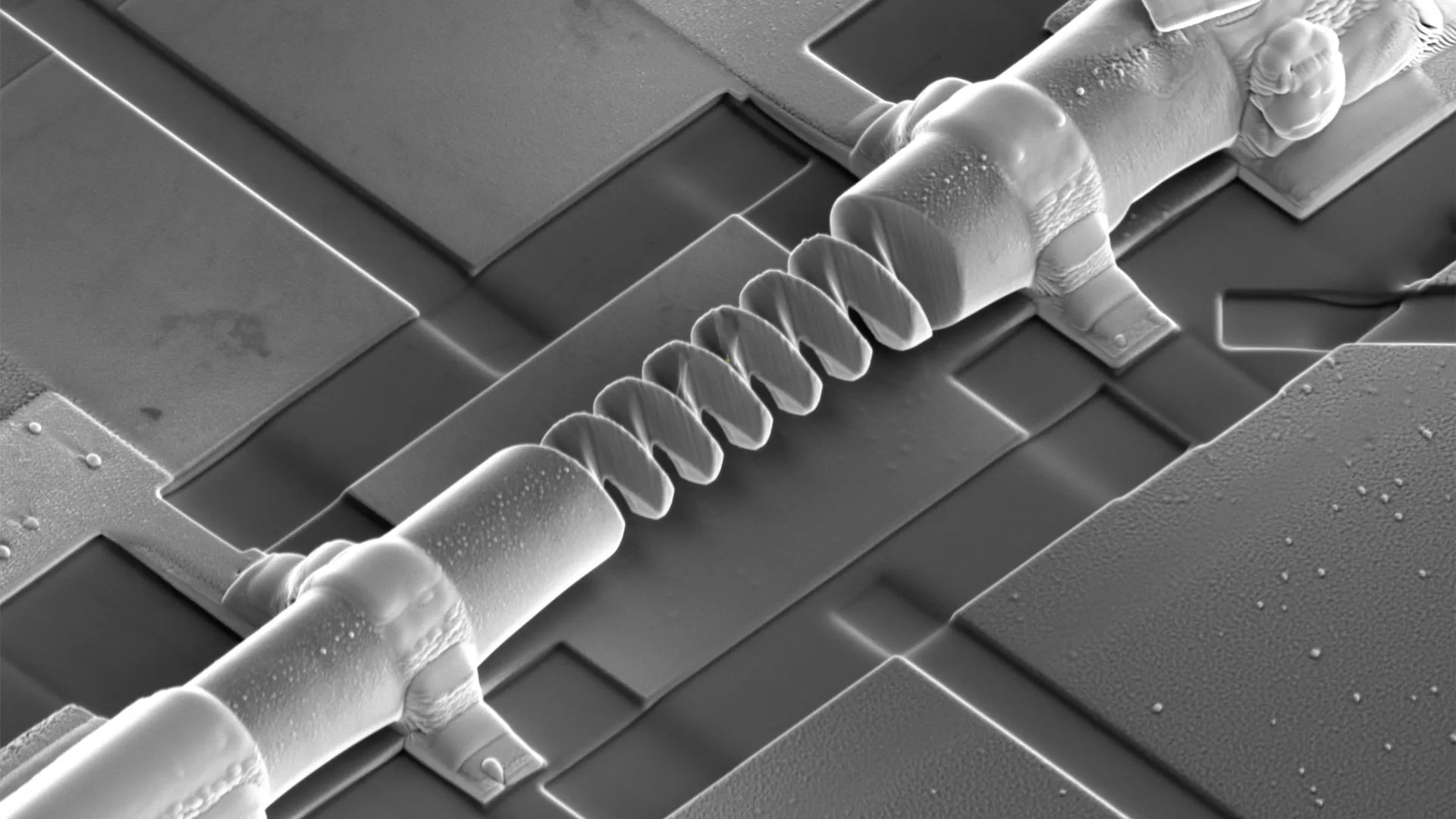

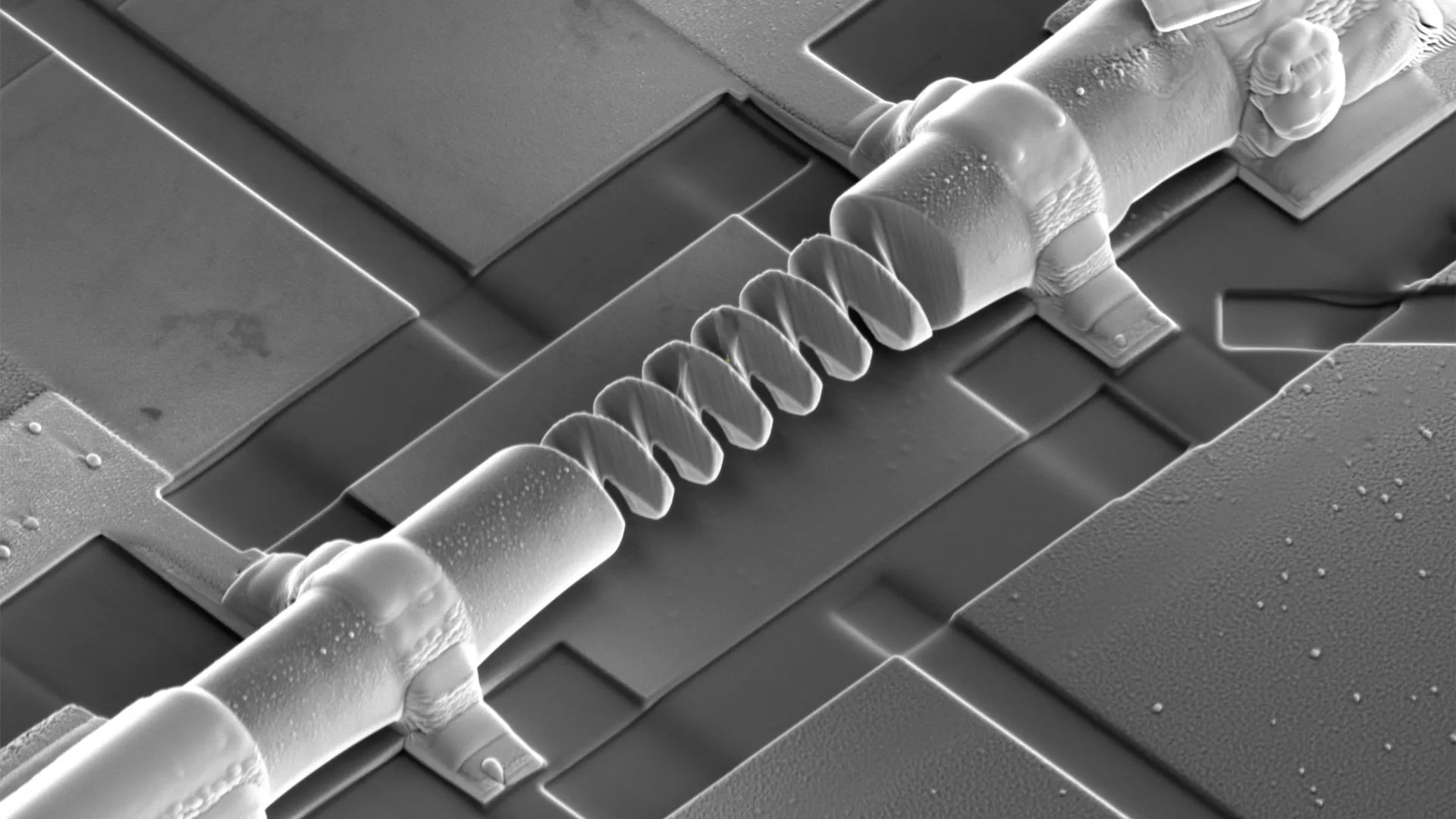

Twisting Tiny Crystals Turns Them Into Switchable Nano‑Diodes

A team of researchers at Japan's RIKEN institute has unveiled a new way to shape tiny electronic components straight out of single-crystal materials. By carving three-dimensional helices (tiny spiral structures) from a magnetic crystal, they discovered that the shape itself can dictate how electricity flows. In these nano-helices, electric current flows more easily in one direction than the other, as in a diode, and the preferred direction can be flipped either by reversing the crystal's magnetization or by reversing the helix's handedness, that is, the direction of its twist. The result shows that geometry, not just material composition, can serve as a design tool for future electronics. The scientists say the method could open doors to novel devices that combine exotic electronic states with engineered curvature, potentially boosting the performance of memory chips, logic circuits, and sensors. While still confined to the laboratory, the approach hints at a future where the shape of a material becomes as important as its chemistry in building faster, more efficient, and more versatile electronic technologies.


China’s AI Leap: From Chatbots to Smart Agents in 2026

China’s artificial‑intelligence scene is moving from a frantic "hundred‑model war" to a focused sprint on real‑world usefulness. Tech giants such as Tencent have already embedded their home‑grown large models into more than 900 internal tools, turning AI into a daily productivity aid. Meanwhile, Baidu has split its research into foundational and applied teams, acknowledging that only a handful of core models will survive, but countless niche applications will flourish.

Industry reports show the number of foundational models is shrinking while their performance in specific sectors—healthcare, enterprise deployment, robotics—soars. The new buzzword is "agentic AI": systems that can set goals, plan steps, learn from mistakes and remember context, much like a digital butler rather than a simple chatbot. Researchers stress that these agents still need better reliability and long‑term memory before wide adoption.

A parallel technical race is underway. Companies like DeepSeek are championing lighter, more efficient architectures that achieve higher "intelligence density"—more capability per unit of compute and data. This shift from sheer scale to smarter, sparser attention mechanisms promises cheaper, faster AI.

By the numbers, China now hosts over 6,000 AI firms, has a core AI market worth more than 1.2 trillion yuan, and holds 60% of global AI patents. The Five-Year Plan beginning in 2026 will weave AI into industry, culture, public services and governance, turning the technology from a novelty into an all-purpose engine for the nation's next growth chapter.


China Launches Satellite Super‑Computers to Run AI in Space

China is turning the sky into a giant data center. In a push to move computing out of ground-based facilities and into orbit, the country is building a network of thousands of “computing satellites” that can process data in orbit instead of sending it to the ground first. At a recent seminar, Guoxing Aerospace unveiled its ambitious “Star Computing” roadmap, which envisions 2,800 satellites working together to support AI agents on land, at sea, in the air and in space. The plan has already reached a milestone: in November 2025 the company uploaded the Qwen-3 large language model to an orbiting satellite, marking the first time a general-purpose AI model was run in space. The model answered queries sent from the ground and returned results in under two minutes, demonstrating that on-orbit inference is feasible. Meanwhile, China Power Construction launched “Power Construction No.1,” the nation's first satellite dedicated to monitoring energy infrastructure. Equipped with an X-band synthetic-aperture radar, the satellite can see through clouds and rain to deliver precise, all-weather images of pipelines, power lines and other critical assets, closing long-standing gaps in coverage and accuracy. Together, these initiatives signal a new era in which space becomes a fast, reliable partner for AI, industry and national security.
