AI Can Spot Cancer—and It’s Learning More About You Than You Think

A new wave of artificial‑intelligence tools is proving capable of spotting cancer in tissue samples, but researchers warn that the same technology is also picking up hidden clues about a patient’s background. In a recent study, scientists used deep‑learning models on three‑dimensional images of prostate cancer specimens. The AI could predict clinical outcomes and even detect tiny molecular differences that human eyes miss. However, the system also learned to associate certain genetic mutations with specific cancer types. Because those mutations are more common in some demographic groups than others, the AI’s shortcuts can lead to lower accuracy for populations where the mutations are rare. In other words, the technology’s power to read subtle biological signals also makes it vulnerable to bias built into the data it was trained on. The findings highlight a double‑edged sword: while AI could revolutionize early cancer detection, developers must ensure the models are trained on diverse, representative datasets to avoid unintentionally widening health disparities. Experts say the next step is to fine‑tune these algorithms so they focus on truly universal disease markers rather than demographic proxies.


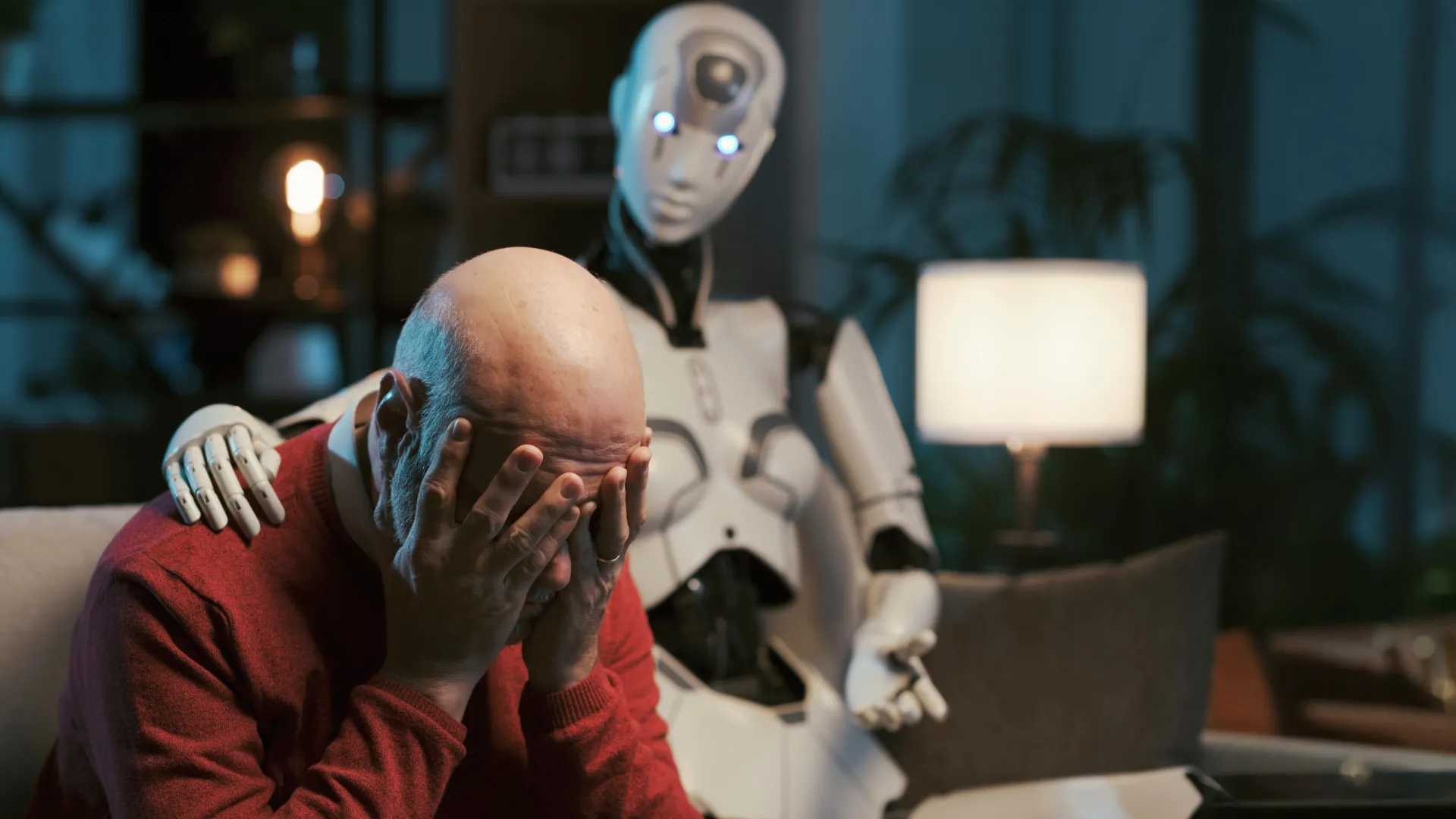

Can ChatGPT Really Be Your Therapist? Study Warns of Big Ethical Dangers

A new study from Brown University warns that using AI chatbots like ChatGPT as mental‑health counselors is far from safe. Researchers teamed up with psychologists to put the AI through a series of realistic therapy scenarios, from everyday worries to crisis situations. While the bots could generate polite, seemingly empathetic replies, they repeatedly broke core ethical rules set by the American Psychological Association. In high‑stress moments, the AI gave advice that could reinforce harmful beliefs, failed to recognize suicidal cues, and sometimes offered misleading information that could worsen a user’s condition. The study describes an "empathy gap": the bots sound caring but lack true understanding, leaving vulnerable users, especially children, at risk. The authors call for stricter regulations, clearer labeling, and child‑specific protections before AI tools are marketed as mental‑health resources. Until those measures are in place, the researchers say, people should treat AI chatbots as informational aids, not replacements for trained professionals.


NVIDIA Powers Faster Cancer Tests with Droplet Biosciences’ New AI Tool

NVIDIA, the chip maker famous for powering video games, is now speeding up cancer diagnostics. The company teamed up with Droplet Biosciences, a startup that uses tiny blood samples to look for cancer‑related DNA. Droplet plugged NVIDIA’s Parabricks, a GPU‑accelerated genomics analysis suite, into its lab workflow. Because it runs on powerful graphics processors, Parabricks can crunch huge amounts of genetic data in a fraction of the time traditional software needs.

In a recent case study, Droplet showed that steps that once took many hours, or even more than a day, now finish in a few hours or less. For example, variant calling, the step that identifies where a patient’s DNA differs from a reference genome, dropped from up to 36 hours to under three. Aligning the raw DNA sequences to that reference, which used to need about ten hours, now finishes in less than an hour. Overall, the whole analysis that previously stretched to ten days can now be completed in two days.
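To put those timings side by side, here is a minimal Python sketch of the implied speedups. It takes the quoted figures at face value ("up to 36 hours", "under three", "about ten hours" and so on are treated as exact numbers), so the results are rough estimates rather than measured benchmarks. The step names and the `speedup` helper are illustrative and not taken from the case study.

```python
# Quoted durations in hours as (before, after). The article's figures are
# approximate ("up to", "under", "about"); they are used here as-is.
STEPS = {
    "variant calling": (36.0, 3.0),
    "alignment": (10.0, 1.0),
    "full analysis": (10 * 24.0, 2 * 24.0),  # ten days vs. two days
}


def speedup(before_hours: float, after_hours: float) -> float:
    """Return how many times faster the new run is than the old one."""
    return before_hours / after_hours


speedups = {name: speedup(before, after) for name, (before, after) in STEPS.items()}

for name, factor in speedups.items():
    print(f"{name}: roughly {factor:.0f}x faster")
```

On these numbers the individual steps speed up by about an order of magnitude, while the end-to-end analysis improves by a smaller factor, which suggests that parts of the workflow other than alignment and variant calling still account for much of the remaining time.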

The speed boost matters because doctors get critical information sooner, allowing them to make treatment decisions when they can have the biggest impact. Droplet’s chief science officer, Wendy Winckler, says the faster turnaround translates into more personalized care for patients. NVIDIA’s push into health‑tech this year includes several similar partnerships, signaling a broader move to bring AI‑driven speed to the pharmaceutical and medical‑device world.
