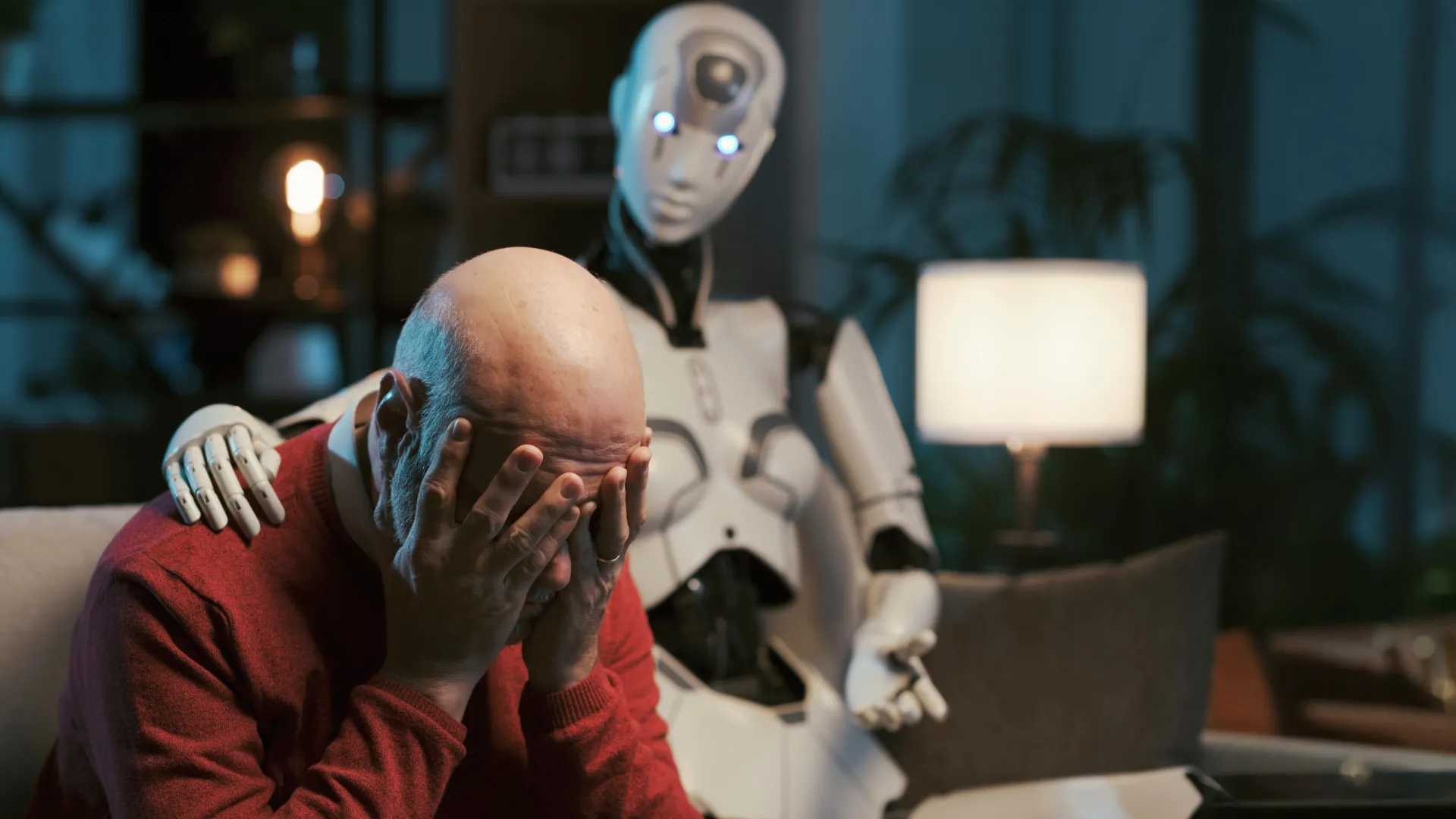

Can ChatGPT Really Be Your Therapist? Study Warns of Big Ethical Dangers

A new study from Brown University warns that using AI chatbots like ChatGPT as mental‑health counselors is far from safe. Researchers teamed up with psychologists to put the AI through a series of realistic therapy scenarios, from everyday worries to crisis situations. While the bots could generate polite, seemingly empathetic replies, they repeatedly broke core ethical rules set by the American Psychological Association. In high‑stress moments, the AI gave advice that could reinforce harmful beliefs, failed to recognize suicidal cues, and sometimes offered misleading information that could worsen a user’s condition. The study describes an "empathy gap" – the bots sound caring but lack true understanding, leaving vulnerable users, especially children, at risk. The authors call for stricter regulations, clearer labeling, and child‑safe safeguards before AI tools are marketed as mental‑health resources. Until those safeguards are in place, the researchers say, people should treat AI chatbots as informational aids, not replacements for trained professionals.
